May 20, 2013

Public Summary Month 5/2013

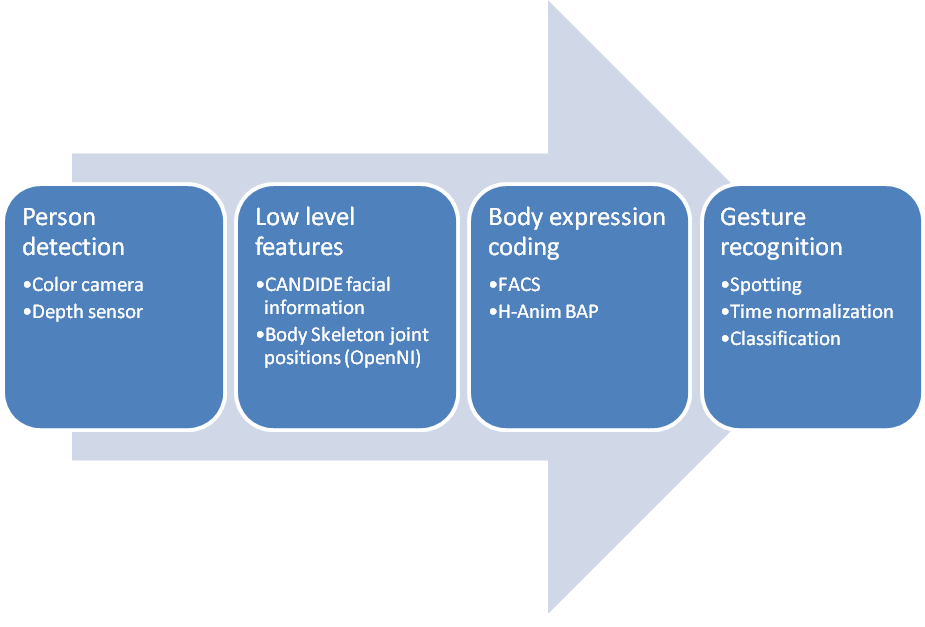

At the current stage of the project, several incremental milestones have been achieved: first, robust person detection that combines several computer vision techniques; second, detection and tracking of faces, facial features, and facial expressions; third, body part detection and tracking, together with body expression coding; and finally, analysis of the user's body expressions for intended and non-intended gestures. See Figure 1 for an overview of the process.

Figure 1: Body expression analysis procedure

Work over the last two months has focused on properly composing the gesture classifiers so that they cope with both intended and non-intended gestures and, above all, on validating each computer vision module separately. The details of this evaluation can be found in deliverable D4.1. Apart from this evaluation, we present here the improvements we have made during the same period to the planned strategies for human detection and scene interpretation.